Massive Data Transformation & Distribution Requirements Crushed with High-Performance Middleware

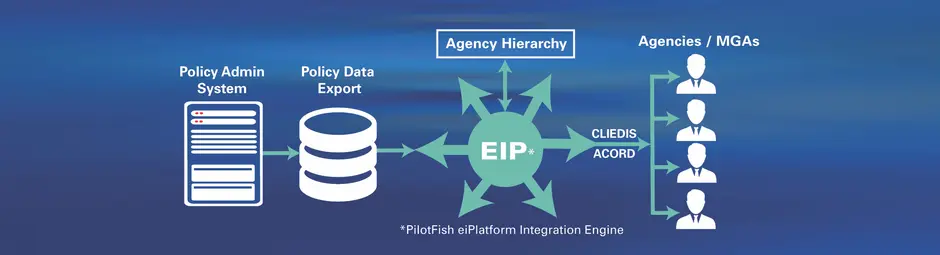

In this case study, you’ll learn how a Canadian insurance group leveraged the PilotFish Integration Engine solution to read in and parse data for electronic distribution to over 30 agencies and MGAs. In doing so, PilotFish was able to demonstrate the performance and scalability of an eiPlatform interface and deliver an affordable solution on a technology stack with plenty of room for future growth.

THE CLIENT

The Client is an international financial services holding company with interests in life insurance, health insurance, retirement and investment services, asset management and reinsurance businesses. Through its subsidiaries, it meets the financial security needs of its customers with a diverse range of products and services, including handling policy claims, managing retirement and investment savings, and providing workplace mental health support. Three of its subsidiaries have licensed PilotFish products.

THE CHALLENGE

On a nightly basis, the Client provided a fixed-width file extract containing information about all the policies in the company’s book of business. This extract contained a number of data records for each policy, including detailed information on associated Parties and Coverages. As business expanded, the number of policies in this file was expected to grow to 1-2 million, with a file size in the range of 10-20 GB.
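The layout of the Client's extract is not described here, so the following Java sketch assumes a hypothetical record layout: a two-character record type, a ten-character policy number and a nine-character face amount. It shows the basic technique for fixed-width data. Each field is sliced by offset and width, the padding is trimmed, and the values are emitted as XML elements. The class and field names are illustrative only and are not PilotFish or eiConsole APIs.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Sketch: slicing one fixed-width record into named fields and emitting XML.
// The layout below is HYPOTHETICAL; the Client's real extract layout is not given.
class FixedWidthRecord {

    // One field definition: XML element name, start offset, width in characters.
    record Field(String name, int start, int width) {}

    // Hypothetical layout for a "coverage" record line.
    static final List<Field> COVERAGE_LAYOUT = List.of(
            new Field("RecordType", 0, 2),
            new Field("PolicyNumber", 2, 10),
            new Field("FaceAmount", 12, 9));

    // Cut each field out of the line and trim the space padding.
    // Fields that fall past the end of a short line become empty strings.
    static Map<String, String> parse(String line, List<Field> layout) {
        Map<String, String> values = new LinkedHashMap<>();
        for (Field f : layout) {
            int end = Math.min(f.start() + f.width(), line.length());
            String raw = f.start() < line.length() ? line.substring(f.start(), end) : "";
            values.put(f.name(), raw.trim());
        }
        return values;
    }

    // Escape the characters that may not appear verbatim in XML text.
    static String escape(String s) {
        return s.replace("&", "&amp;").replace("<", "&lt;").replace(">", "&gt;");
    }

    // Render the parsed fields as a flat XML element named after the record kind.
    static String toXml(String elementName, Map<String, String> values) {
        StringBuilder sb = new StringBuilder("<").append(elementName).append('>');
        values.forEach((k, v) -> sb.append('<').append(k).append('>')
                .append(escape(v)).append("</").append(k).append('>'));
        return sb.append("</").append(elementName).append('>').toString();
    }

    public static void main(String[] args) {
        String line = "CVPOL0000042000250000";
        System.out.println(toXml("Coverage", parse(line, COVERAGE_LAYOUT)));
    }
}
```

Using a `LinkedHashMap` keeps the XML elements in layout order, which makes the output predictable for downstream XSLT.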

The Client also provided a “hierarchy file”. This file contained information about the relationships between agents and various distribution channels, as well as details about those individuals and organizations. This file contained roughly 50-300 distributors and their associated agents, with the total file size expected to be approximately 15-30 GB.

When a set of policy-related records was processed, the Hierarchy file had to be used both to enrich the data stream with distributor- and agent-level information and to direct the policy information to the correct outbound extract. Each outbound CITS extract was bound to the distributor through which its policies were sold.
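One simple way to use the hierarchy for both enrichment and routing is a pair of hash maps keyed by agent and distributor identifiers, so each policy costs two constant-time lookups. The Java sketch below assumes each agent belongs to exactly one distributor. The record names, fields and appended XML elements are hypothetical and are not part of the ACORD or CITS schemas.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Optional;

// Sketch: an in-memory hierarchy index used to enrich a policy and to pick
// its distributor-specific extract. All names here are HYPOTHETICAL.
class HierarchyIndex {
    record Distributor(String id, String name) {}
    record Agent(String id, String name, String distributorId) {}

    private final Map<String, Agent> agentsById = new HashMap<>();
    private final Map<String, Distributor> distributorsById = new HashMap<>();

    void addDistributor(Distributor d) { distributorsById.put(d.id(), d); }
    void addAgent(Agent a) { agentsById.put(a.id(), a); }

    // Routing: agent id -> owning distributor (empty if the hierarchy has no link).
    Optional<Distributor> distributorForAgent(String agentId) {
        Agent agent = agentsById.get(agentId);
        if (agent == null) {
            return Optional.empty();
        }
        return Optional.ofNullable(distributorsById.get(agent.distributorId()));
    }

    // Enrichment: append agent and distributor details after the policy XML.
    // Values are assumed to be XML-safe here; real code should escape them.
    Optional<String> enrich(String policyXml, String agentId) {
        Agent agent = agentsById.get(agentId);
        return distributorForAgent(agentId).map(d -> policyXml
                + "<Agent id=\"" + agent.id() + "\">" + agent.name() + "</Agent>"
                + "<Distributor id=\"" + d.id() + "\">" + d.name() + "</Distributor>");
    }

    public static void main(String[] args) {
        HierarchyIndex index = new HierarchyIndex();
        index.addDistributor(new Distributor("D1", "Acme Brokerage"));
        index.addAgent(new Agent("A7", "Jane Doe", "D1"));
        System.out.println(index.enrich("<Policy/>", "A7").orElse("no route"));
    }
}
```

Returning `Optional` makes an unroutable policy (an agent missing from the hierarchy file) explicit, so the caller can decide whether to log it, park it or fail the run.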

Each policy (and its related parties) had to be translated into a CITS-compliant ACORD XML representation and aggregated into the associated distributor-specific file. The final file for each distributor had to represent ACORD and CITS-compliant TXLife transactions. The file then had to be named appropriately per the CITS standard, compressed via the ZIP algorithm and staged for FTP transmission to the various distributors.
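The compression step can be handled with the JDK's own `java.util.zip` package. This sketch zips one finished XML document into an in-memory archive and reads it back to confirm the round trip. The entry name `distributor-D1.xml` is a placeholder, since the actual CITS file-naming convention is not reproduced in this case study.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.util.zip.ZipEntry;
import java.util.zip.ZipInputStream;
import java.util.zip.ZipOutputStream;

// Sketch: compressing one distributor's finished XML document into a ZIP archive.
// The entry name is a placeholder, not the CITS naming convention.
class CitsZipper {

    static byte[] zip(String entryName, String xml) throws IOException {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (ZipOutputStream zip = new ZipOutputStream(bytes)) {
            zip.putNextEntry(new ZipEntry(entryName));
            zip.write(xml.getBytes(StandardCharsets.UTF_8));
            zip.closeEntry();
        } // closing the stream writes the ZIP central directory
        return bytes.toByteArray();
    }

    // Read the archive back, returning the name and text of its first entry.
    static String[] unzipFirst(byte[] archive) throws IOException {
        try (ZipInputStream in = new ZipInputStream(new ByteArrayInputStream(archive))) {
            ZipEntry entry = in.getNextEntry();
            if (entry == null) {
                throw new IOException("empty archive");
            }
            String text = new String(in.readAllBytes(), StandardCharsets.UTF_8);
            return new String[] {entry.getName(), text};
        }
    }

    public static void main(String[] args) throws IOException {
        byte[] archive = zip("distributor-D1.xml", "<TXLife/>");
        String[] roundTrip = unzipFirst(archive);
        System.out.println(roundTrip[0] + " -> " + roundTrip[1]);
    }
}
```

For multi-gigabyte output, the `ByteArrayOutputStream` would be swapped for a `FileOutputStream` on the staged file so the data is streamed to disk rather than held in memory.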

The production of 50-300 outbound CITS XML files from the in-force Policy extract and associated hierarchy file was required to be handled within a 6-7 hour batch window.

THE SOLUTION

PilotFish, along with the Client, completed a major implementation demonstrating the performance and scalability of an eiPlatform interface used to generate a set of ACORD XML data feeds. Previously, PilotFish had worked with the Client to implement an initial prototype of the CITS In-Force interface. This exercise demonstrated the capabilities of the PilotFish IDE (the eiConsole) and how it could be used to implement an interface that generated CITS-compliant output from smaller samples of the extracts mentioned above. While “correct” output was obtained from this prototype, the results of initial scalability testing were insufficient to quell concerns over the performance of the production interface.

In the initial proof-of-concept, the interface was designed as three separate eiConsole routes. The first route was responsible for parsing the Hierarchy file and generating a fast, in-memory representation for subsequent reference. The second route was responsible for parsing and transforming individual policies from the administration system extract into individual CITS fragments. Distinct data transformations were implemented for generating the Party, Holding and Relation elements of the CITS feed. The third route was responsible for combining the file-based fragments and generating the distributor-specific CITS XML files.
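Those transformations, like the XSLT step in the single-route redesign described below, turn a policy record into Party, Holding and Relation elements. The sketch below shows one way to do that with the standard `javax.xml.transform` (JAXP) API, emitting all three from a single simplified policy. The input format and stylesheet are hypothetical; the output borrows ACORD element names loosely and is not schema-valid TXLife.

```java
import java.io.StringReader;
import java.io.StringWriter;
import javax.xml.transform.Templates;
import javax.xml.transform.TransformerException;
import javax.xml.transform.TransformerFactory;
import javax.xml.transform.stream.StreamResult;
import javax.xml.transform.stream.StreamSource;

class PolicyToAcord {

    // HYPOTHETICAL, heavily simplified stylesheet: one policy in, Holding/Party/Relation out.
    static final String XSLT = """
            <xsl:stylesheet version="1.0" xmlns:xsl="http://www.w3.org/1999/XSL/Transform">
              <xsl:output method="xml" omit-xml-declaration="yes"/>
              <xsl:template match="/Policy">
                <OLifE>
                  <Holding id="Holding_{PolicyNumber}">
                    <Policy><PolNumber><xsl:value-of select="PolicyNumber"/></PolNumber></Policy>
                  </Holding>
                  <Party id="Party_{Insured/Id}">
                    <FullName><xsl:value-of select="Insured/Name"/></FullName>
                  </Party>
                  <Relation OriginatingObjectID="Holding_{PolicyNumber}"
                            RelatedObjectID="Party_{Insured/Id}"/>
                </OLifE>
              </xsl:template>
            </xsl:stylesheet>
            """;

    private static Templates compiled; // compiled once; Templates objects are thread-safe

    private static synchronized Templates templates() throws TransformerException {
        if (compiled == null) {
            compiled = TransformerFactory.newInstance()
                    .newTemplates(new StreamSource(new StringReader(XSLT)));
        }
        return compiled;
    }

    static String transform(String policyXml) throws TransformerException {
        StringWriter out = new StringWriter();
        // A Transformer is not thread-safe, so a fresh one is created per call.
        templates().newTransformer()
                .transform(new StreamSource(new StringReader(policyXml)), new StreamResult(out));
        return out.toString();
    }

    public static void main(String[] args) throws TransformerException {
        String policy = "<Policy><PolicyNumber>P42</PolicyNumber>"
                + "<Insured><Id>C9</Id><Name>Jane Smith</Name></Insured></Policy>";
        System.out.println(transform(policy));
    }
}
```

Compiling the stylesheet once into `Templates` and reusing it avoids re-parsing the XSLT for every batch, which matters when the same transformation runs tens of thousands of times per run.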

In the follow-on engagement, the interface was simplified to include only a single route. The data flow was as follows:

- Periodically, the Listener component polls for the Hierarchy and Policy extract files.

- The Hierarchy file is parsed into a set of Java value objects (POJOs) and indexed by Agent and Distributor identifiers.

- The Policy file is processed through an iterative file-read routine, wherein:
    - Each line of the file is read into memory.
    - The line is converted into a corresponding XML representation.
    - When all of the lines for a particular policy have been read and converted to XML:
        - The agent identifier is found and used to look up the associated distribution channel in the Hierarchy index.
        - All relevant agent and distributor data is appended to the XML stream.
        - The complete XML fragment is added to a memory-based cache of Policy-level fragments organized by Distribution Channel.

- Each Distribution Channel cache is populated with a growing set of Policy XML documents. Once this cache reaches a configured capacity (e.g., 25 policies), the XML documents are combined into a single XCS eiPlatform transaction and fired. A transaction attribute is set to store the identifier of the associated distribution channel, and a counter of sent policies is incremented by the batch size.

- Each transaction, consisting of a batch of Policies and related data in XML form, arrives in the Transform component, where it is transformed via XSLT into an ACORD and CITS-compliant XML fragment containing Party, Relation, and Holding data.

- Each batch, now a CITS XML fragment, arrives in the Transport component. When this component receives a fragment:
    - The distribution channel identifier is acquired from a Transaction Attribute.
    - An index is searched by distribution channel identifier to locate the appropriate CITS file stream.
    - If no such entry is found, a new file stream is created, including the generation of the ACORD TXLife / CITS header.
    - The CITS document fragment is appended to the appropriate file stream.
    - A count of received policies is updated.

- When the Listener has finished processing all lines in the Policy file:
    - All remaining caches are flushed into the system.
    - Final counts are updated.
    - Input files are deleted.

- When the Transport determines that the Listener has completed its work and that the “received” counter equals the “sent” counter:
    - The ACORD TXLife / CITS footer is added to each file stream.
    - The files are closed.
    - ZIP versions of the files are created.
    - The process completes.
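The batching and aggregation logic in these steps can be sketched in plain Java. Here `ChannelBatcher` plays the Listener's role: it caches policy fragments per distribution channel and fires a batch once a channel reaches the configured size. `CitsAggregator` plays the Transport's role: it appends each batch to a per-channel stream, writes a header on first use, and adds the footer only once the received count matches the sent count. All names are hypothetical, `StringBuilder`s stand in for file streams, the XSLT step between the two stages is omitted, and the header and footer are placeholders rather than real TXLife envelopes.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.function.BiConsumer;

// Listener side (HYPOTHETICAL): caches policy XML per distribution channel and
// fires a batch when a channel's cache reaches the configured size.
class ChannelBatcher {
    private final int batchSize;
    private final BiConsumer<String, List<String>> fire; // receives (channelId, batch)
    private final Map<String, List<String>> cacheByChannel = new HashMap<>();
    private long sent; // policies handed downstream so far

    ChannelBatcher(int batchSize, BiConsumer<String, List<String>> fire) {
        this.batchSize = batchSize;
        this.fire = fire;
    }

    void add(String channelId, String policyXml) {
        List<String> cache = cacheByChannel.computeIfAbsent(channelId, id -> new ArrayList<>());
        cache.add(policyXml);
        if (cache.size() >= batchSize) {
            flush(channelId);
        }
    }

    // Called once the whole Policy file has been read: push out partial batches.
    void flushAll() {
        for (String channelId : new ArrayList<>(cacheByChannel.keySet())) {
            flush(channelId);
        }
    }

    private void flush(String channelId) {
        List<String> batch = cacheByChannel.remove(channelId);
        if (batch == null || batch.isEmpty()) {
            return;
        }
        sent += batch.size(); // the sent counter grows by the batch size
        fire.accept(channelId, batch);
    }

    long sent() { return sent; }

    public static void main(String[] args) {
        CitsAggregator transport = new CitsAggregator();
        ChannelBatcher listener = new ChannelBatcher(2, transport::accept);
        listener.add("D1", "<P1/>");
        listener.add("D2", "<P2/>");
        listener.add("D1", "<P3/>"); // D1 reaches batch size 2 and is fired
        listener.flushAll();         // end of file: D2's partial batch is fired
        if (transport.readyToClose(true, listener.sent())) {
            transport.closeAll().forEach((id, doc) -> System.out.println(id + ": " + doc));
        }
    }
}

// Transport side (HYPOTHETICAL): one stream per channel, header on first use,
// footer only when every sent policy has been received.
class CitsAggregator {
    private final Map<String, StringBuilder> streams = new HashMap<>();
    private long received;

    void accept(String channelId, List<String> fragments) {
        StringBuilder stream = streams.computeIfAbsent(channelId,
                id -> new StringBuilder("<TXLife><!-- placeholder header for " + id + " -->"));
        fragments.forEach(stream::append);
        received += fragments.size();
    }

    boolean readyToClose(boolean listenerDone, long sent) {
        return listenerDone && received == sent;
    }

    // Append the placeholder footer and return each channel's finished document.
    Map<String, String> closeAll() {
        Map<String, String> documents = new HashMap<>();
        streams.forEach((id, stream) -> documents.put(id, stream.append("</TXLife>").toString()));
        streams.clear();
        return documents;
    }
}
```

This sketch is single-threaded. If the Transform and Transport stages ran on separate threads, the counters and the stream index would need thread-safe types such as `AtomicLong` and `ConcurrentHashMap`, which is exactly why the "received equals sent" check is needed before closing files.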

THE BENEFITS

The reimplementation of this interface was conducted mainly on a developer-class laptop running Windows. In the new server environment, the full file of 1,000,000 policies was processed in approximately 20 minutes. Tests on smaller versions of the file indicate that performance scales linearly (O(N)) with the number of policies. Given this, the observed rate of 1,000 policies per second indicates a capacity of roughly 21-25 million policies in the 6-7 hour batch window, far more than the number of in-force policies of even the very largest North American carriers.
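As a rough check on these figures, batch capacity is throughput multiplied by the length of the window. Applying this both to the stated rate of about 1,000 policies per second and to the rate implied by the 20-minute full-file run gives:

```latex
\begin{aligned}
C &= r \times T, \qquad T_{6\,\mathrm{h}} = 21{,}600~\mathrm{s}, \quad T_{7\,\mathrm{h}} = 25{,}200~\mathrm{s} \\
r = 1{,}000~\mathrm{policies/s} &\;\Rightarrow\; C \approx 2.16 \times 10^{7} \text{ to } 2.52 \times 10^{7} \text{ policies} \\
r = \frac{10^{6}~\text{policies}}{1{,}200~\mathrm{s}} \approx 833~\mathrm{policies/s} &\;\Rightarrow\; C \approx 1.8 \times 10^{7} \text{ to } 2.1 \times 10^{7} \text{ policies}
\end{aligned}
```

Even the lower estimate is roughly nine times the projected peak of 2 million policies.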

Running the same interface on affordable server infrastructure easily exceeds service-level expectations more than 10 times over, leaving plenty of room for future growth and additional interface instrumentation.

Since its founding in 2001, PilotFish has been solely focused on the development of software products that enable the integration of systems, applications, equipment and devices. Billions of bits of data traverse PilotFish software, connecting virtually every kind of entity in healthcare, 90% of the top insurers, financial service companies, a wide range of manufacturers, as well as governments and their agencies. PilotFish distributes Product Licenses and delivers services directly to end users, solution providers and Value-Added Resellers across multiple industries to address a broad spectrum of integration requirements.

PilotFish will reduce your upfront investment, deliver more value and generate a higher ROI. Give us a call at 860 632 9900.